The mobility characteristics are subsequently introduced for the learning environments as well, merging another important feature for online long-distance and traditional face-to-face education. This way, with multimedia and online tools available in the Internet, it was possible to combine multiple approaches to learning, mixing the use of virtual and physical resources for what is generally defined as Blended learning (B-learning). Information and communication systems features (as multimedia and mostly the Internet) are employed for making available lessons and lectures, improving experiences of exchange and interactivity among educators and students. In this sense, real-time tools have become an important demand for E-learning and, consequently, nowadays long-distance courses feature mainly in online environments. Intending to cover this gap, models based on computer-supported collaborative learning were proposed, encouraging the shared development of knowledge and, therefore, lifelong learning. Although they were electronically supported, those models lack interactivity for students and educators, as interaction is superficially or not approached at all.

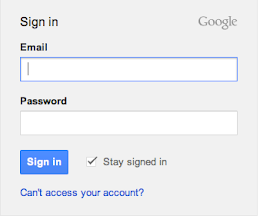

But with the advent of computer systems, early E-learning models have been presented mostly as computer-based learning, automating old education and teaching styles, whereby the role model was for transmitting information and knowledge. A user study with 14 blind participants comparing keyboard-based screen readers with NVMouse, showed that the latter significantly reduced both the task-completion times and user effort (i.e., number of user actions) for different word-processing activities.Į-learning is a model of apprenticeship which concerns courses for any branches of science (natural or social) with classes supported by electronic means and, lastly, addressing long-distance and in-classroom courses.įor decades, long-distance courses were offered only by TV or correspondence. Furthermore, NVMouse enhances the efficiency of accessing frequently-used application commands by leveraging a data-driven prediction model that can determine what commands the user will most likely access next, given the current 'local' screen-reader context in the document. Specifically, with word processing applications as the representative case study, we designed and developed NVMouse as a concrete manifestation of this repurposing idea, in which the spatially distributed word-processor controls are mapped to a virtual hierarchical 'Feature Menu' that is easily traversable non-visually using simple scroll and click input actions. This paper explores the idea of repurposing visual input modalities for non-visual interaction so that blind users too can draw the benefits of simple and efficient access from these modalities. As a consequence, blind users generally require significantly more time and effort to do even simple application tasks (e.g., applying a style to text in a word processor) using only keyboard, compared to their sighted peers who can effortlessly accomplish the same tasks using a point-and-click mouse. 
In contrast, blind users are unable to leverage these visual input modalities and are thus limited while interacting with computers using a sequentially narrating screen-reader assistive technology that is coupled to keyboards. Visual 'point-and-click' interaction artifacts such as mouse and touchpad are tangible input modalities, which are essential for sighted users to conveniently interact with computer applications. A user study with nine participants revealed that MagPro significantly reduced the time and workload to do routine command-access tasks, compared to using the state-of-the-art screen magnifier. Informed by the study findings, we developed MagPro, an augmentation to productivity applications, which significantly improves usability by not only bringing application commands as close as possible to the user's current viewport focus, but also enabling easy and straightforward exploration of these commands using simple mouse actions.

In this study, we observed that most usability issues were predominantly due to high spatial separation between main edit area and command ribbons on the screen, as well as the wide span grid-layout of command ribbons these two GUI aspects did not gel with the screen-magnifier interface due to lack of instantaneous WYSIWYG (What You See Is What You Get) feedback after applying commands, given that the participants could only view a portion of the screen at any time. To fill this knowledge gap, we conducted a usability study with 10 low-vision participants having different eye conditions.

Despite the importance of these applications, little is known about their usability with respect to low-vision screen-magnifier users. Many people with low vision rely on screen-magnifier assistive technology to interact with productivity applications such as word processors, spreadsheets, and presentation software.
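To make the NVMouse idea more concrete, the following is a minimal sketch in Python of a hierarchical 'Feature Menu' driven only by scroll and click actions, combined with a next-command predictor. Everything here is an illustrative assumption, not NVMouse's actual implementation. The names `MenuNode`, `FeatureMenuNavigator`, `NextCommandPredictor`, and `build_menu` are hypothetical, and so are the context labels such as `"paragraph"`. The predictor is a deliberately simple stand-in: it counts how often each command is used per local context, in place of whatever data-driven model the paper uses.

```python
from __future__ import annotations

from collections import Counter, defaultdict
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MenuNode:
    """A node in a hypothetical hierarchical 'Feature Menu'."""
    label: str
    children: list[MenuNode] = field(default_factory=list)

    def is_command(self) -> bool:
        # Leaf nodes stand for executable word-processor commands.
        return not self.children


class FeatureMenuNavigator:
    """Scroll moves focus among siblings; click enters a submenu or returns a command."""

    def __init__(self, root: MenuNode):
        self.path = [root]          # submenus entered so far, root first
        self.saved: list[int] = []  # focus index to restore when leaving a submenu
        self.index = 0              # focused item within the current submenu

    @property
    def items(self) -> list[MenuNode]:
        return self.path[-1].children

    def focused(self) -> MenuNode:
        return self.items[self.index]

    def scroll(self, steps: int) -> str:
        # Wrap around at both ends; the returned label is what a screen reader would speak.
        self.index = (self.index + steps) % len(self.items)
        return self.focused().label

    def click(self) -> Optional[str]:
        node = self.focused()
        if node.is_command():
            return node.label       # command the application should execute
        self.path.append(node)      # descend into the submenu
        self.saved.append(self.index)
        self.index = 0
        return None

    def back(self) -> None:
        if len(self.path) > 1:
            self.path.pop()
            self.index = self.saved.pop()


class NextCommandPredictor:
    """Per-context command frequencies (a simple stand-in for a learned model)."""

    def __init__(self) -> None:
        self.counts: dict[str, Counter] = defaultdict(Counter)

    def observe(self, context: str, command: str) -> None:
        self.counts[context][command] += 1

    def top(self, context: str, k: int = 3) -> list[str]:
        # most_common keeps first-seen order among commands with equal counts.
        return [cmd for cmd, _ in self.counts[context].most_common(k)]


def build_menu(base: list[MenuNode], predictor: NextCommandPredictor, context: str) -> MenuNode:
    # Hypothetical design: put predicted commands in a 'Suggested' submenu at the top.
    suggested = [MenuNode(c) for c in predictor.top(context)]
    head = [MenuNode("Suggested", suggested)] if suggested else []
    return MenuNode("Feature Menu", head + base)


if __name__ == "__main__":
    predictor = NextCommandPredictor()
    for cmd in ["Bold", "Heading 1", "Bold", "Italic"]:
        predictor.observe("paragraph", cmd)
    base = [
        MenuNode("Font", [MenuNode("Bold"), MenuNode("Italic"), MenuNode("Underline")]),
        MenuNode("Styles", [MenuNode("Heading 1"), MenuNode("Normal")]),
    ]
    nav = FeatureMenuNavigator(build_menu(base, predictor, "paragraph"))
    print(nav.focused().label)  # Suggested
    nav.click()                 # enter the Suggested submenu
    print(nav.click())          # Bold
```

Placing predicted commands at the top of the hierarchy means the most likely next command is reachable with a single click, instead of a long sequence of keyboard or scroll actions. A real system would replace the frequency counts with a properly trained model and derive the context from the screen reader's position in the document.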
